Generator of fake portraits

People tend not to think about the effect that neural networks have on our lives, because we usually see the result of their work and not the "face" of a neural network. Perhaps that is why, at the end of 2020, a generator of fake photos became one of the main topics in technology media for several weeks. Few people had imagined that AI could generate a realistic face of a non-existent person in a couple of seconds. The fake portraits look very realistic, and that is frightening. If AI can create faces for itself and write text like a real person, what is going to happen next?

Generator of fake faces of non-existent humans

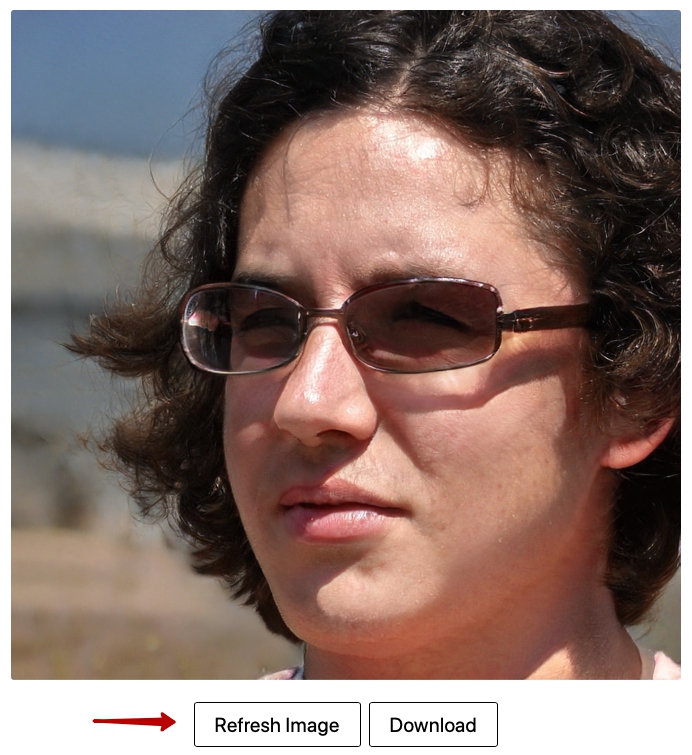

We are talking about the website thispersondoesnotexist.com ("this person does not exist dot com"). Below, we cover its history and areas of application and explain how the generator works.

The AI face generator is powered by StyleGAN, a neural network developed by Nvidia in 2018. A GAN (generative adversarial network) consists of two competing neural networks: the generator produces something (here, images of faces), and the discriminator tries to determine whether a given result is real or was produced by the generator. Training ends when the generator consistently fools the discriminator.
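The adversarial setup can be illustrated with a deliberately tiny, one-dimensional toy. This is not StyleGAN or Nvidia's code: it is a minimal sketch assuming a generator with a single learnable shift `theta` (it turns random noise `z` into `z + theta`) and a logistic-regression discriminator, trained in alternating steps with the standard GAN losses. The "real data" are assumed to be samples from a normal distribution, and the function name `train_toy_gan` and all parameter values are hypothetical, chosen only for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(real_mean=2.0, real_std=1.0, steps=3000, batch=64, lr=0.05, seed=0):
    """Train a hypothetical 1-D toy GAN and return (theta, w, c).

    Generator:     G(z) = z + theta, with z ~ N(0, 1)
    Discriminator: D(x) = sigmoid(w * x + c), the estimated probability that x is real
    """
    rng = random.Random(seed)
    theta = 0.0      # generator parameter: starts away from the real data
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        real = [rng.gauss(real_mean, real_std) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + theta for _ in range(batch)]

        # Discriminator step: minimise mean(-log D(real)) + mean(-log(1 - D(fake))).
        gw = gc = 0.0
        for x in real:
            g = sigmoid(w * x + c) - 1.0  # derivative of -log D(x) w.r.t. the logit
            gw += g * x
            gc += g
        for x in fake:
            g = sigmoid(w * x + c)  # derivative of -log(1 - D(x)) w.r.t. the logit
            gw += g * x
            gc += g
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: minimise mean(-log D(G(z))), i.e. move the fake
        # samples towards the region the discriminator currently calls "real".
        gtheta = 0.0
        for x in fake:
            gtheta += (sigmoid(w * x + c) - 1.0) * w  # chain rule, dG/dtheta = 1
        theta -= lr * gtheta / batch

    return theta, w, c


if __name__ == "__main__":
    theta, w, c = train_toy_gan()
    print("generator shift:", round(theta, 2))
    print("D(x) at the real mean:", round(sigmoid(w * 2.0 + c), 2))
```

After training, `theta` should end up close to the real mean (2.0 by default) and the discriminator's output near 0.5, meaning it can no longer tell real samples from generated ones. Because this discriminator is linear, it essentially only compares where the two sets of samples lie on the number line; StyleGAN uses deep convolutional networks for both players, which is what lets it match something as rich as a human face.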

An interesting point is that creating photographs of non-existent people was a by-product: the main goal was to train the AI to recognize fake faces and faces in general. The company needed this to improve the performance of its video cards by automatically recognizing faces and applying different rendering algorithms to them. However, since the StyleGAN code is publicly available, an engineer at Uber was able to take it and create a random face generator that rocked the internet.

About the generator

For the user, everything is very simple. As soon as you open the website, a random face is generated, and you can download the picture if you want. If you don't like the person you are seeing, refresh the page. If the same face appears again, wait a couple of seconds and refresh once more: the website shows the results of the generator's work, which are updated every 2-3 seconds, not the generator itself.

How to recognize an image of a fake person

It is very difficult to recognize an image of a fake person. The AI is so advanced that 90% of fakes go unnoticed by an ordinary person and 50% by an experienced photographer, and there are no services for recognizing them. Occasionally, however, the neural network makes mistakes, which leave artifacts: an incorrectly bent pattern, a strange hair color, and so on.

What you need to do is take a closer look: the human visual system is far better than a computer at spotting such artifacts, so a forgery can often be detected by careful inspection.

Jevin West and Carl Bergstrom created a website called “Which Face Is Real”, which aims to teach people to be more critical of potentially fake portraits. Before concluding that the person in a photo really exists, there are several things to consider.

One of the most common signs is asymmetry, particularly in eyeglasses and earrings.

Uneven teeth are quite common too: look for odd details such as pixelation or repeated incisors. Hair in general may appear to glow around the edges or look too straight and streaky, again with visible asymmetry.

Study the background carefully. In a fake, it may contain unusual distortions in shapes and lines or look torn overall. Also look for bleed-through, where bright colors from the background spill over onto the fake person's head.

Another telltale sign of NVIDIA's StyleGAN algorithm is glossy “water splotches”.